
Mafia&Co: building 4 apps with the same architecture and code-sharing system

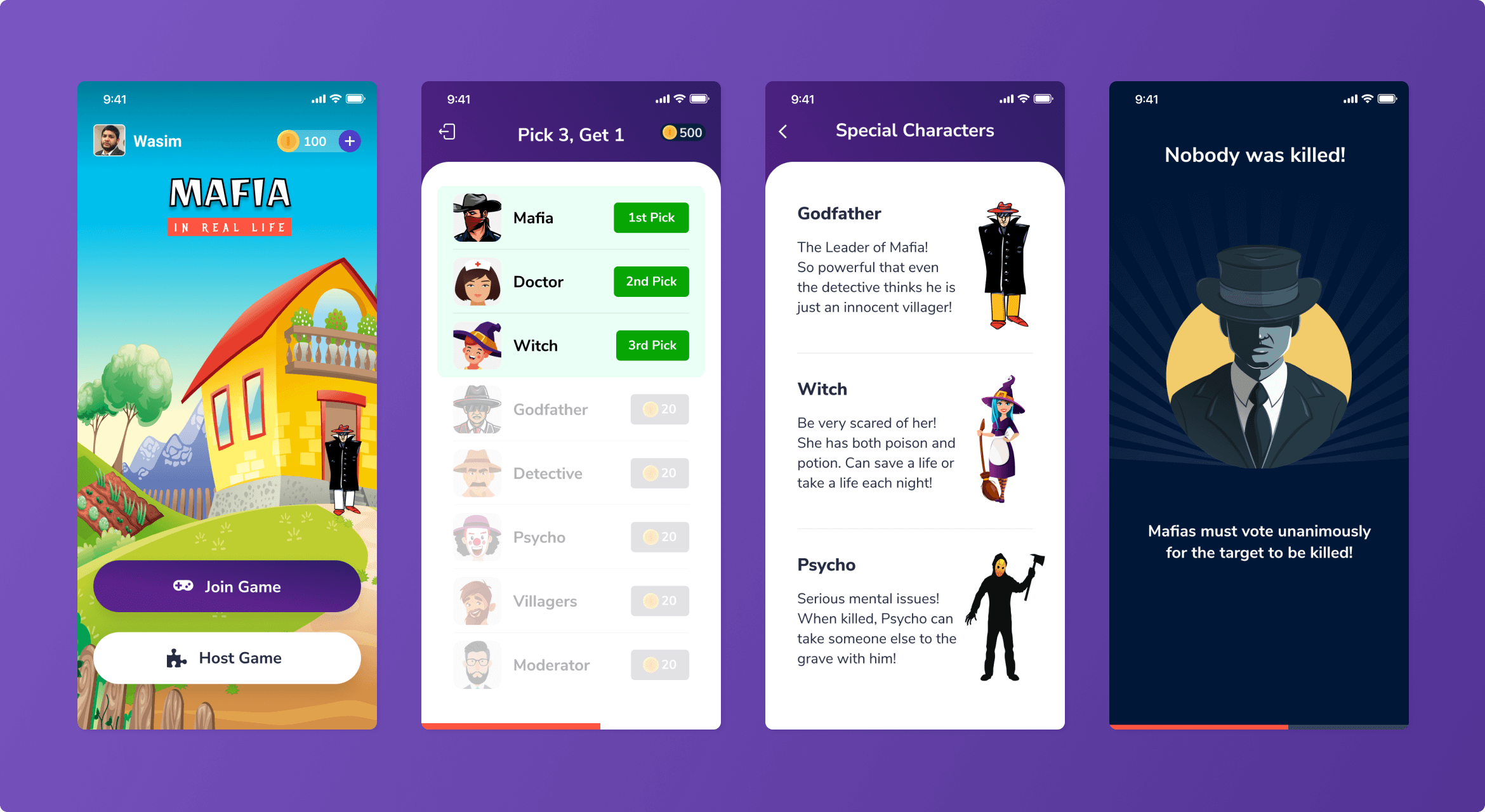

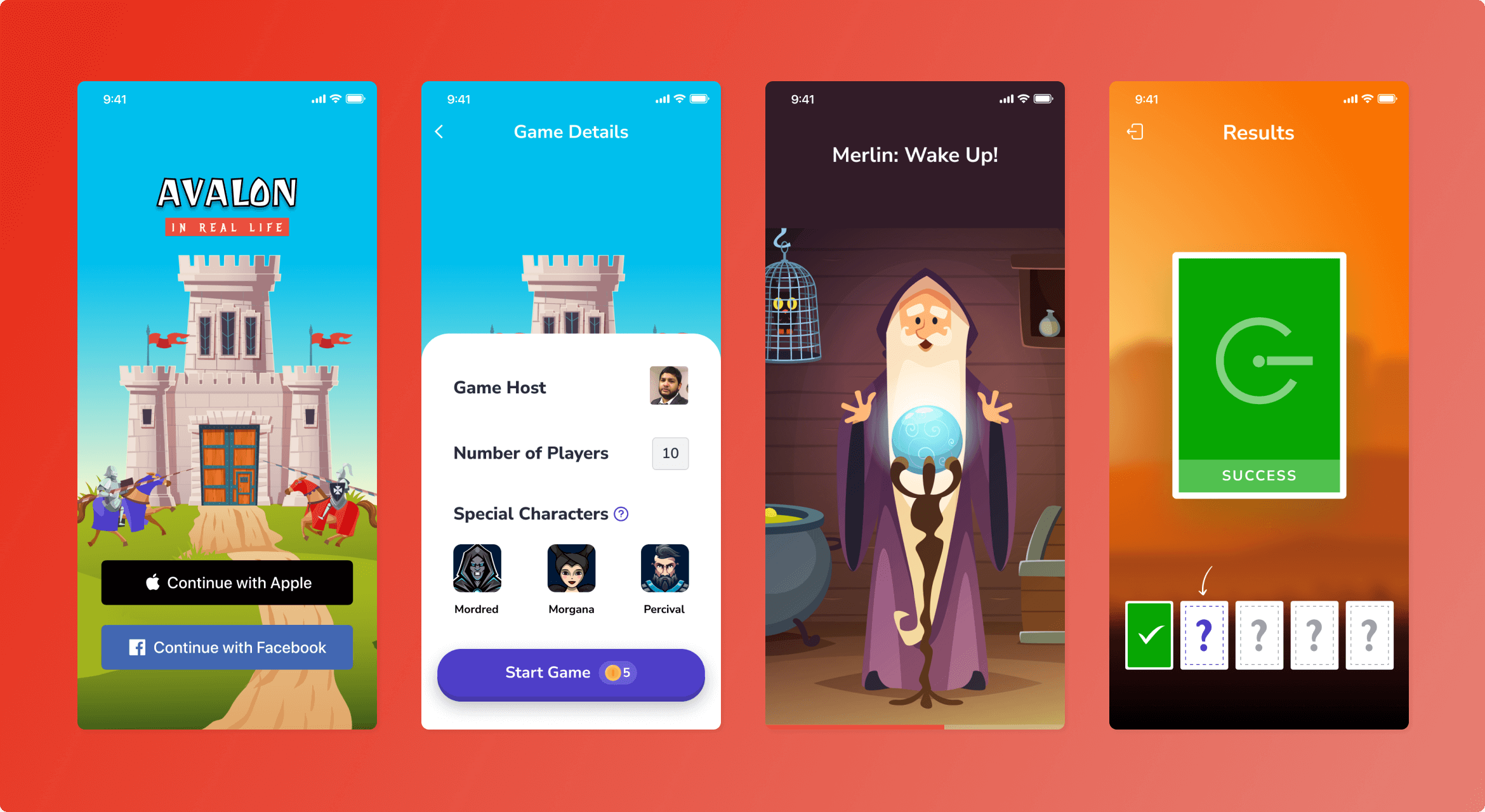

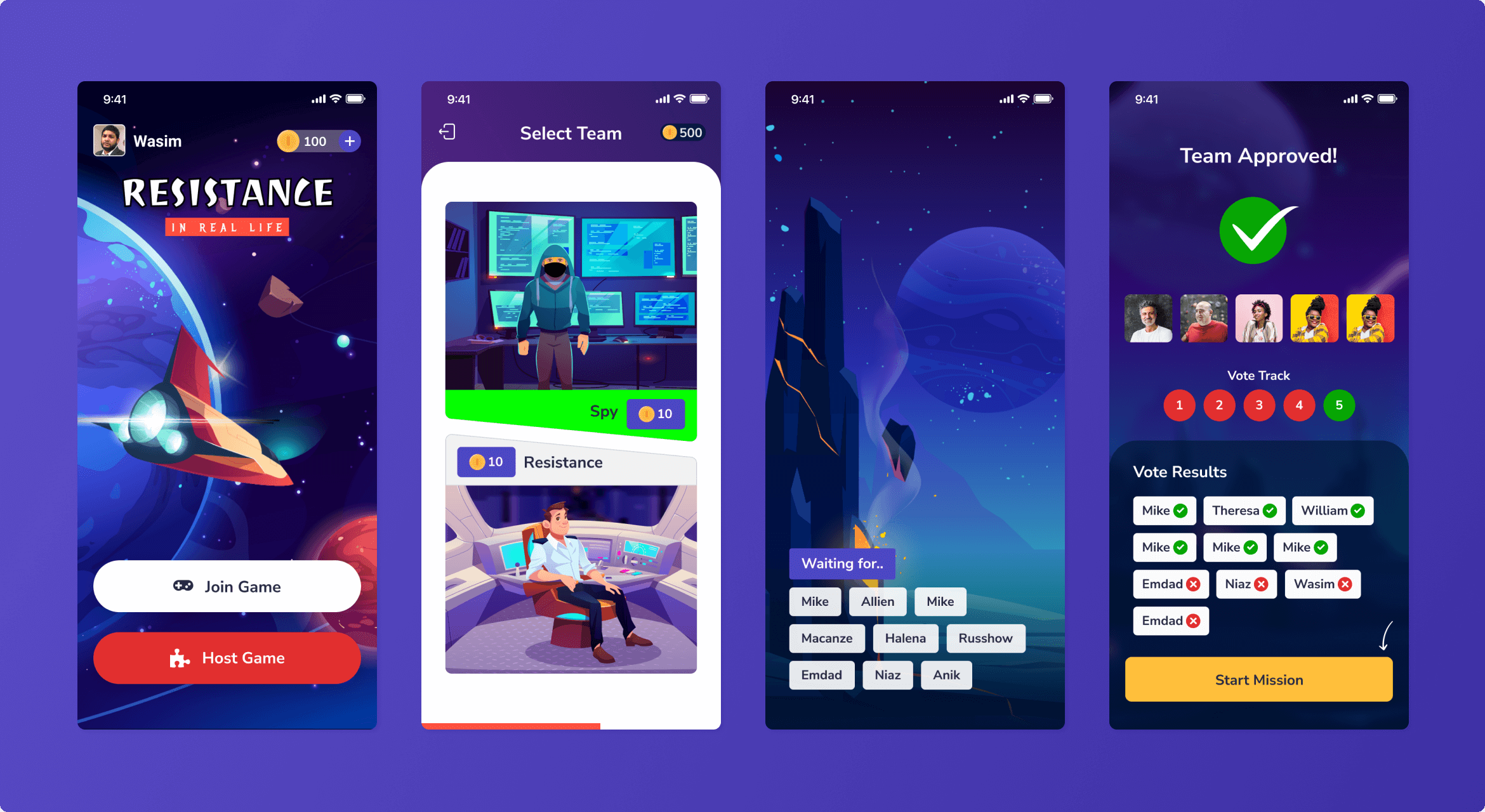

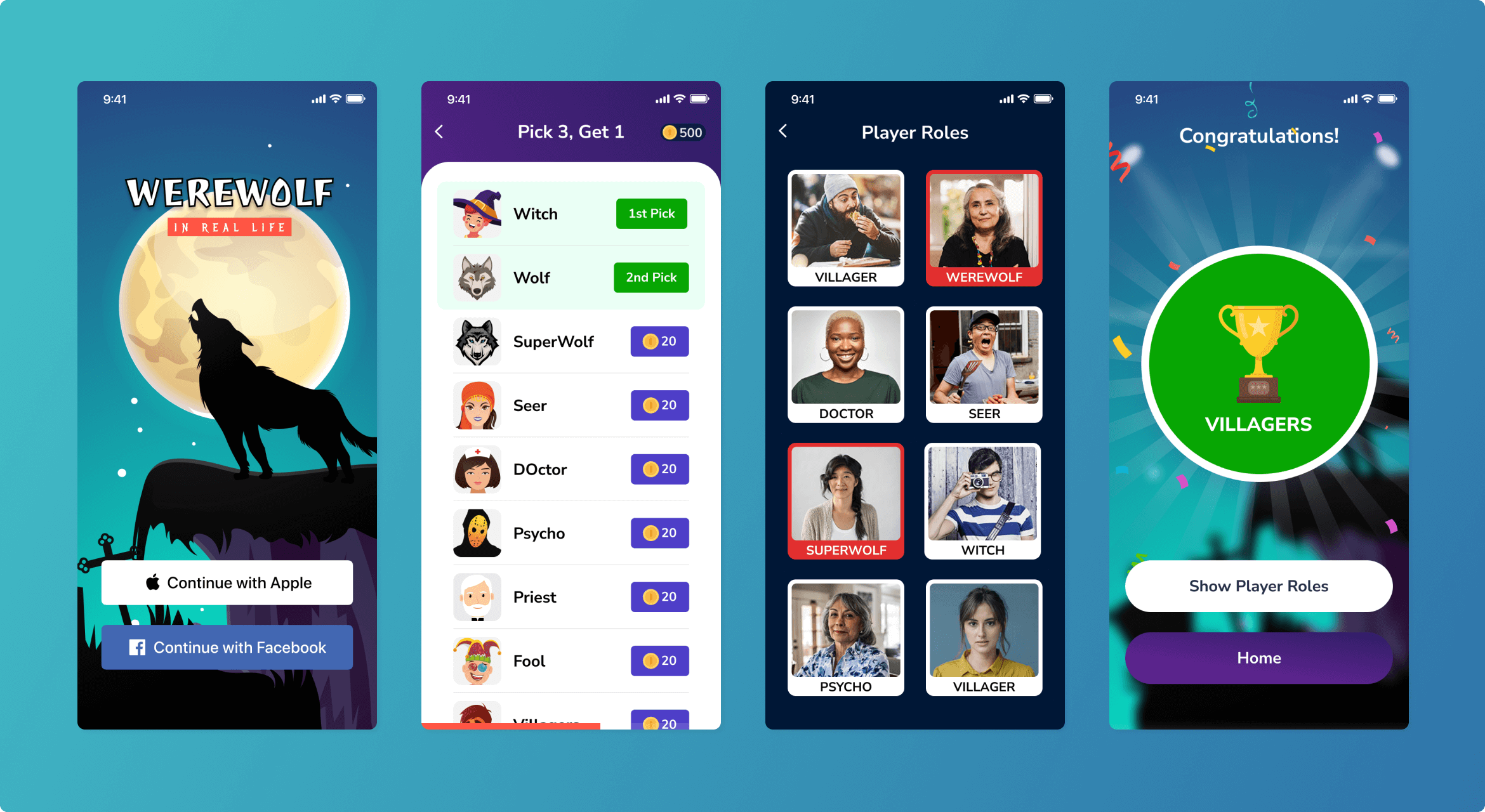

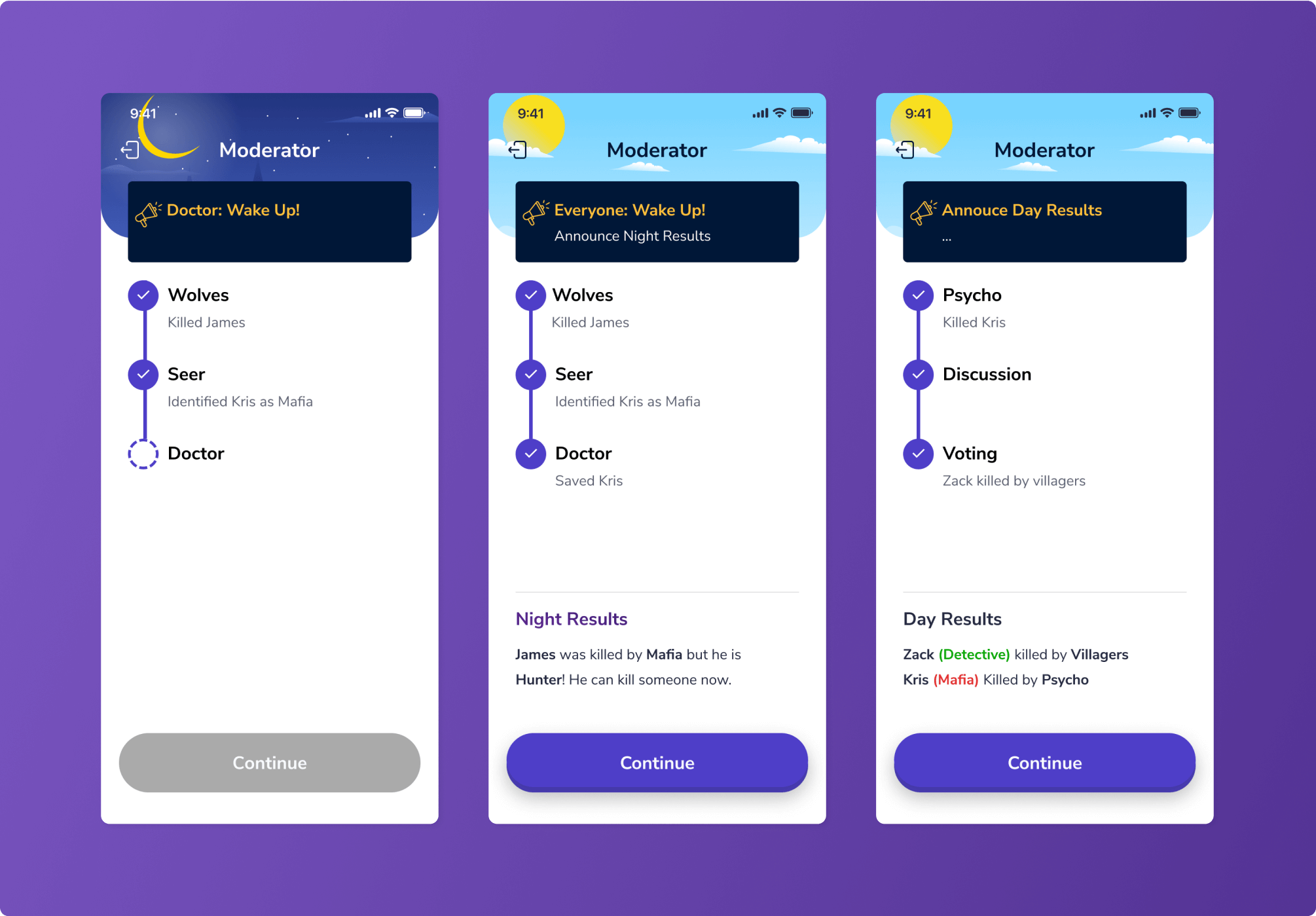

Our client, a US-based game development company, reached out to us for mobile development services. They needed to convert 4 board game apps into iOS apps. The games share similar logic and mechanics but differ in details such as the names of roles and their responsibilities.

The games (Mafia, Werewolf, Resistance, and Avalon) allow friends to play together, sitting in the same room or remotely via an internet connection.

The NERDZ LAB mission was to port native game flows with all variations into the mobile app architecture and ensure resource efficiency at the same time.

SCREENS:

Services

Mobile development

iOS

Technologies:

Swift

Pusher Channels

UnityAds

Apple Subscriptions

Google Subscriptions

Team composition:

1 iOS developer

1 Back-end developer

1 QA engineer

1 Delivery

1 Project manager

1 Fractional CTO

THE CHALLENGE

In the initial phase of working with the client, NERDZ LAB:

- gathered all requirements

- analyzed all weak points

- examined all four game systems in full

Through these interactions and research, NERDZ LAB identified the challenges described below.

Architecture logic

As these are board games, they have complex sets of rules. Even a human sometimes finds it difficult to understand and work through all game variations. It takes even more effort to translate all those rules, logical jumps, and edge cases into code.

This mattered because the architecture had to be well structured, easy to scale, and easy to understand.

Live game updates

Since these are online games, every player's action must be propagated to the other players. Additionally, each player's game flow may react differently, depending on their role.

Besides the architectural complexity of an online game responding to players' actions, there's another difficulty: reacting to network lag and handling game interruptions.

This includes questions such as:

- How the games should behave if one player has already been notified about a completed action (for instance, that the Mafia has made its choice) while another player's game is still lagging behind;

- How the games should behave if a player loses their internet connection;

- How the games should behave in a race condition: the system should process event A (the Mafia has made its move) before event B (the selection of who the Mafia is), but because event A is delivered late, event B arrives first.

In all these cases, the system must react correctly and let the players continue the game in the right order.

Single controlling system

All 4 games have similar mechanics: nobody knows each other’s roles, and you need to unveil the Impostor; meanwhile, the Impostor is supposed to stay alive.

To reflect this common ground and avoid duplicating the same logic across the 4 systems (and therefore code redundancy), we needed to build an architecture that would share one codebase across all 4 applications. Any change to the shared logic made for one game would carry over to all four.

At the same time, we had to keep the differences separate from this shared code so that changes to it wouldn't affect them. In this way, each game's differences stay unique.

This point is critical for saving time and reducing system errors. It means that we write the code only once, and it's automatically shared across all 4 games, which:

- cuts the time needed for app development and solution deployment;

- reduces the risk that the same flow behaves differently in separate applications.

To reduce complexity across all 4 gaming apps, we implemented the solutions given below.

Building the framework for the games. After holding several meetings with the client and technical experts, we mapped out the games with all possible exceptions and variations arising from different moves. Using this framework, we set up a class architecture that clearly defined the games' responses to player actions. We built a decision tree to decide how the system should behave in different scenarios and under a wide variety of influences (for instance, the tree would determine that a player previously killed by the Mafia would be healed by the Doctor).
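To illustrate the Mafia/Doctor example, here is a minimal sketch of one branch of such a decision tree. It is not the project's actual code: `NightAction`, `NightOutcome`, and `resolveNight` are hypothetical names, and the rule it encodes (a heal on the same target cancels the kill) is the common Mafia rule assumed for illustration.

```swift
// Hypothetical model: none of these names come from the original project.
enum NightAction {
    case mafiaKill(target: String)
    case doctorHeal(target: String)
}

enum NightOutcome: Equatable {
    case nobodyDied
    case died(String)
    case saved(String)
}

/// Walks a small decision tree:
/// 1. Did the Mafia target anyone? If not, nobody died.
/// 2. Did the Doctor heal that same player? If so, the player is saved.
/// 3. Otherwise the targeted player died.
func resolveNight(_ actions: [NightAction]) -> NightOutcome {
    var killTarget: String?
    var healTarget: String?
    for action in actions {
        switch action {
        case .mafiaKill(let target): killTarget = target
        case .doctorHeal(let target): healTarget = target
        }
    }
    guard let victim = killTarget else { return .nobodyDied }
    return victim == healTarget ? .saved(victim) : .died(victim)
}
```

Keeping each scenario as an explicit branch makes it easier to check the code against the rules agreed with the client, one case at a time.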

Creating the updates channel. To send updates among players, we used the Pusher Channels service. This enabled us to send notifications as necessary from one player to the others through our server in real time. In addition, the server setup scales its resources automatically. This means that even under a massive load on the event delivery system, its resources increase automatically, and the main module continues to work without lags.

Apart from that, to handle race conditions and delays when sending and receiving information, we created a purpose-built priority queue that processes events in a particular order according to the game's flow. For a user, this means that no matter what order events arrive in, or how severe the lag is, the operations queue either processes each event at the right moment or waits for the event that must come first. Only after a given event has been handled properly does the queue move on to the events that follow it.
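The idea of waiting for the relevant event can be sketched as a small reordering buffer. This is an assumption-laden illustration rather than the queue used in the project: `GameEvent`, its `step` field (the event's position in the game flow), and `OrderedEventQueue` are hypothetical, and the sketch assumes every event carries such a position.

```swift
// Hypothetical event type: `step` is the event's position in the game flow.
struct GameEvent {
    let step: Int
    let name: String
}

/// Buffers events that arrive early and releases them strictly in flow order.
final class OrderedEventQueue {
    private var pending: [Int: GameEvent] = [:]
    private var nextStep = 0
    private let handler: (GameEvent) -> Void

    init(handler: @escaping (GameEvent) -> Void) {
        self.handler = handler
    }

    func receive(_ event: GameEvent) {
        // Ignore duplicates and events for steps that were already handled.
        guard event.step >= nextStep, pending[event.step] == nil else { return }
        pending[event.step] = event
        // Drain every buffered event that is now contiguous with the last handled step.
        while let ready = pending.removeValue(forKey: nextStep) {
            handler(ready)
            nextStep += 1
        }
    }
}
```

If event B (step 1) arrives before event A (step 0), B stays in the buffer; once A arrives, the handler runs for A and then for B. A production version would also need a timeout or resync path for events that never arrive, which this sketch leaves out.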

Developing a shared code application. After numerous discussions on how to reflect the similarities and differences in code, we drew up a list of application modules and defined the shared system that would control the flow of these modules.

Next, we outlined similar modules. For these, we used a unique mechanism of code-sharing across applications.

Finally, we established a system through which each application communicates with the shared code (sending and receiving these modules' information). Its main goal is to streamline the workflow between the shared modules and each individual application.
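One way to picture the split between shared modules and per-game differences is a protocol that each app implements, with the common logic written once against that protocol. The sketch below is hypothetical: `GameTheme`, `RoleDealer`, and the role names "Citizen" and "Villager" are assumptions for illustration, and the text does not describe the project's real module layout.

```swift
// Hypothetical names throughout; only the game titles come from the text.
protocol GameTheme {
    var title: String { get }
    var impostorRole: String { get }
    var civilianRole: String { get }
}

struct MafiaTheme: GameTheme {
    let title = "Mafia"
    let impostorRole = "Mafia"
    let civilianRole = "Citizen"
}

struct WerewolfTheme: GameTheme {
    let title = "Werewolf"
    let impostorRole = "Werewolf"
    let civilianRole = "Villager"
}

/// Shared logic is written once; each app only supplies its own theme.
struct RoleDealer<Theme: GameTheme> {
    let theme: Theme

    /// Shuffles the players and gives the first `impostorCount` the impostor role.
    func deal(players: [String], impostorCount: Int) -> [String: String] {
        var roles: [String: String] = [:]
        for (index, player) in players.shuffled().enumerated() {
            roles[player] = index < impostorCount ? theme.impostorRole : theme.civilianRole
        }
        return roles
    }
}
```

With this shape, a fix inside `RoleDealer` reaches every game at once, while renaming a role touches only that game's theme.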

THE RESULTS

A thoroughly described, elaborated, and established architecture of applications with relevant logic

Real-time updates and notifications on the games, their statuses, and individual players’ positions

4 different apps on the shared code system

Up to 5,000 active users for each game